This is the fourth article in the OWASP Top 10 Series

Overview

At the time of this writing, Sensitive Data Exposure is the number 3 risk in the OWASP Top 10. As in the rest of this OWASP series, we scope the content and samples of this article to web applications developed with .NET technologies (ASP.NET MVC, ASP.NET WF, ASP.NET Core, WebAPI, WCF, EF, etc.). This is a broad risk that now includes other, previously separate OWASP risks such as Insecure Transport Layer and Bad Cryptography, and it is closely related to other existing risks such as Security Misconfiguration and Session Management.

Sensitive Data Exposure moved from number 6 to number 3 in the latest revision of the OWASP Top 10 (2017).

A typical and relatively recent example of Sensitive Data Exposure is the Equifax breach of 2017, in which sensitive data belonging to more than 143 million Americans was exposed (close to 50% of the entire US population). Social security numbers, driver's licences, addresses and even credit card numbers were exposed. The economic cost for Equifax was close to $500 million.

Sensitive Data Exposure Explained

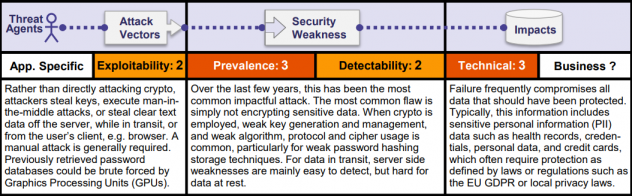

In terms of threat agents, attackers are usually external agents who steal keys or clear-text data from servers or from user browsers (cookies). Attackers can also mount man-in-the-middle (MITM) attacks against insecure data in transit, and some use brute-force techniques to break weak cryptographic algorithms. Exploitability is classified as difficult (monitoring traffic over a network tends to be harder than exploiting a SQL injection vulnerability). Over the last few years prevalence has grown significantly; this is due not only to sensitive data being left unencrypted but also to the use of weak protocols, weak cryptographic algorithms and even weak key generation. It is worth mentioning that this increase in prevalence also has to do with existing systems such as Equifax's. Detectability is currently classified as average; for instance, data in transit over insecure HTTP is easier to detect than data on the server. Technical impact falls into the severe category: once a breach has been exploited, sensitive data such as credit card numbers, credentials and personal data like health information is exposed.

From the OWASP document we see:

Sources for Sensitive Data Exposure

To explain this risk, we can analyse three categories in which sensitive data is at risk.

Insufficient Secure Transport Protection (No proper TLS)

Here we consider two issues: insufficient use of SSL (TLS) and the complete lack of it. With that in mind, let's review three scenarios:

- Credentials over a secure connection: It goes without saying that user credentials must be posted over a secure connection, but the login page itself must also be loaded over a secure connection. If it is not, an attacker may be able to intercept the communication and manipulate the page so that the credentials are posted to the attacker's site.

- Cookies must also be protected by a secure connection. If they are not, the session can be hijacked and the attacker can impersonate the user, as we saw in the previous article about Session Management basics.

- Mixed mode: We can still see websites running in this mode, which is another example of bad practice with SSL. It allows HTTPS pages to load embedded resources such as JavaScript or CSS files over HTTP. This is not a trivial issue, because an attacker who can modify the JavaScript files in transit gains control over the DOM structure.
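The mixed mode scenario above can be checked mechanically. The following is a minimal sketch in Python (chosen here only as a compact, language-neutral illustration, not as part of the .NET stack this series covers) that scans an HTML page for scripts, stylesheets, images and frames referenced over plain HTTP. The class and function names are hypothetical, and the tag list is a simplifying assumption; real pages can pull resources in through other routes (CSS `url()`, fonts, AJAX calls) that this does not cover.

```python
from html.parser import HTMLParser


class MixedContentFinder(HTMLParser):
    """Collects subresource URLs that the page would load over plain HTTP."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "link":
            # Only stylesheet links are fetched as subresources.
            rel = (attr_map.get("rel") or "").lower().split()
            url = attr_map.get("href") if "stylesheet" in rel else None
        elif tag in ("script", "img", "iframe"):
            url = attr_map.get("src")
        else:
            url = None  # ordinary hyperlinks (<a href>) are not mixed content
        if url and url.lower().startswith("http://"):
            self.insecure.append((tag, url))


def find_mixed_content(html):
    """Return a list of (tag, url) pairs for resources referenced over HTTP."""
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure
```

Running `find_mixed_content` over the rendered HTML of each HTTPS page gives a quick list of embedded resources that still need to be moved to HTTPS.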

Note: About SSL vulnerabilities: “The POODLE attack (which stands for “Padding Oracle On Downgraded Legacy Encryption”) is a man-in-the-middle exploit which takes advantage of Internet and security software clients’ fallback to SSL 3.0. … On December 8, 2014 a variation of the POODLE vulnerability that affected TLS was announced.”

“The concepts of SSL (Secure Socket Layer) and TLS (Transport Layer Security) are often used interchangeably. SSL v3.1 is equivalent to TLS v1.0, but different versions of SSL and TLS are supported by modern web browsers and frameworks”
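Because downgrade attacks such as POODLE rely on a fallback to old protocol versions, one common mitigation is to refuse those versions outright. The sketch below uses Python's standard `ssl` module purely as an illustration; the function name is hypothetical, and choosing TLS 1.2 as the floor is an assumption that should be checked against your own compatibility requirements.

```python
import ssl


def make_client_context():
    """Build a client-side TLS context that rejects legacy protocol versions."""
    # create_default_context() turns on certificate and hostname verification.
    context = ssl.create_default_context()
    # Refuse SSL 3.0, TLS 1.0 and TLS 1.1 during the handshake.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

A connection attempted with this context fails during the handshake if the server only offers an older protocol version, instead of silently falling back to it.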

Bad Cryptography Policies

I will cover this topic in proper detail in a dedicated set of articles within this OWASP series. For now, we will review three common scenarios in which the bad use of cryptography creates vulnerabilities related to sensitive data:

- Password Storage: Sometimes we see systems in which passwords are stored in plain text, which goes against defence in depth principles and even against common sense. In other cases passwords are stored using symmetric encryption, which is also considered a bad practice: if the system is compromised and the key is obtained, all the passwords are disclosed as well. The recommendation is clear: use a strong one-way hashing algorithm for password storage.

- Weak Key Protection: The recommendation here is clear: don't store your keys in configuration files. If you are using symmetric encryption you need to protect your keys, because they are the only line of defence for your ciphertexts; if your keys are compromised, all the encryption becomes useless. Sometimes asymmetric encryption is used to encrypt the symmetric keys, which provides a high level of security.

- Weak Encryption Algorithms: In light of the latest incidents, we have seen that some of the encryption algorithms used in the past are clearly insufficient. Sometimes the weakness comes from poorly generated keys (insufficient length), padding modes, etc.
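To make the password storage recommendation concrete, here is a minimal sketch of salted, iterated one-way hashing using PBKDF2 from Python's standard library. The function names are hypothetical, and the iteration count is an assumed work factor that should be tuned to your hardware and current guidance rather than copied as-is. On the .NET side, PBKDF2 is available through the `Rfc2898DeriveBytes` class.

```python
import hashlib
import hmac
import os

ITERATIONS = 600_000  # assumed work factor; tune for your own environment


def hash_password(password, iterations=ITERATIONS):
    """Return (salt, iterations, digest) for storage; the password is never stored."""
    salt = os.urandom(16)  # unique random salt per password
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return salt, iterations, digest


def verify_password(password, salt, iterations, expected):
    """Recompute the hash with the stored salt and compare in constant time."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return hmac.compare_digest(candidate, expected)
```

Because the salt is random, two users with the same password end up with different stored digests, and because the function is one-way there is no key whose theft would reveal every password at once.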

General Exposure Risks

There are other general risks that OWASP describes, such as the following:

- Sensitive Data in the URL: Many applications pass sensitive data in the URL. For instance, as we saw in the OWASP Top 2 article on session management and broken authentication, applications can be configured to persist the session id in the URL. As a rule of thumb, never persist sensitive data in the URL, even if you are using HTTPS.

- Browser Auto-Complete: If you have autocomplete enabled in your browser, chances are that sensitive data will be persisted there. Imagine the consequences if your credit card details are saved in your browser and someone else has access to your computer.

- Protect access to your logs: Log files tend to contain sensitive data. Some applications do not properly protect access to their logs, so sensitive data can leak from there.
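The URL and log risks above often combine: a session id placed in a query string ends up in every access log that records the request. As a damage-limitation sketch only (it does not replace the advice to keep sensitive data out of URLs), the following Python function masks selected query parameters before a URL is written to a log. The function name and the parameter names in `SENSITIVE_PARAMS` are hypothetical examples.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameter names; adjust to whatever your application uses.
SENSITIVE_PARAMS = {"sessionid", "token", "password", "card"}


def redact_url(url):
    """Return the URL with the values of sensitive query parameters masked."""
    parts = urlsplit(url)
    pairs = parse_qsl(parts.query, keep_blank_values=True)
    cleaned = [
        (key, "REDACTED" if key.lower() in SENSITIVE_PARAMS else value)
        for key, value in pairs
    ]
    return urlunsplit(parts._replace(query=urlencode(cleaned)))
```

For example, `redact_url("https://shop.example/cart?item=42&sessionid=abc123")` keeps the `item` value but replaces the session id with `REDACTED`.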

Defences & Recommendations

As we have seen, from an operational perspective Sensitive Data Exposure is a broad topic. The general recommendations are the following:

- Avoid persisting sensitive data whenever possible, and keep storage windows as short as possible.

- Make sure you protect your sensitive data with robust encryption.

- As far as possible, avoid placing sensitive data in URLs, because URLs tend to be cached by intermediate servers, stored in log files and even shared across social media.

- Disable caching in your application for responses that contain sensitive data.

- Make correct use of HTTPS in your applications and encrypt all data in transit with secure protocols such as TLS, using perfect forward secrecy ciphers and secure parameters. Have your application enforce encryption using directives like HSTS.

- Use TLS, as SSL is no longer considered usable for security.

- All your pages should be served over HTTPS. This includes CSS, JavaScript, images, AJAX requests, POST data and all third-party includes. Otherwise you leave openings for man-in-the-middle attacks.

- Mark your cookies as Secure whenever possible: a secure cookie is only sent to the server over HTTPS, inside an encrypted request.

- Make use of strong hashing algorithms specially designed for passwords when storing them (strong adaptive and salted hashing functions).

- Define a secure policy for Key management when dealing with symmetric encryption.
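Several of the recommendations above (HSTS, no caching of sensitive responses, Secure cookies) come down to sending the right response headers. The sketch below builds those headers with Python's standard library as a framework-neutral illustration. The function name, the cookie name `session` and the one-year `max-age` are assumptions for the example, not values prescribed by the article.

```python
from http.cookies import SimpleCookie


def sensitive_response_headers(session_id):
    """Return (name, value) header pairs for a response carrying sensitive data."""
    cookie = SimpleCookie()
    cookie["session"] = session_id
    cookie["session"]["secure"] = True  # browser sends it only over HTTPS
    cookie["session"]["path"] = "/"
    return [
        # Tell the browser to use HTTPS for this host for the next year (assumed value).
        ("Strict-Transport-Security", "max-age=31536000; includeSubDomains"),
        # Ask browsers and intermediaries not to store this response at all.
        ("Cache-Control", "no-store"),
        ("Set-Cookie", cookie["session"].OutputString()),
    ]
```

Most web frameworks, ASP.NET included, expose these same three settings through their own configuration or middleware, so in practice you would normally set them there rather than build the header strings by hand.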

Improving defences

Implementing the previous recommendations is the basis for defence in depth. At this point, breaches involving sensitive data can be classified into two big groups: data in transit and data at rest (in storage). Each requires different protection techniques, so preventing this risk is sometimes difficult and complex: it is not just a matter of robustly encrypting data, because all the business logic around sensitive data also needs to be secure, which is not always the case. For this logic, the Hdiv RASP solution provides coverage for business logic flaws. As we commented in a previous post, RASP stands for Runtime Application Self-Protection, which allows applications to protect themselves. I strongly recommend the Hdiv RASP solution as a first level of protection.

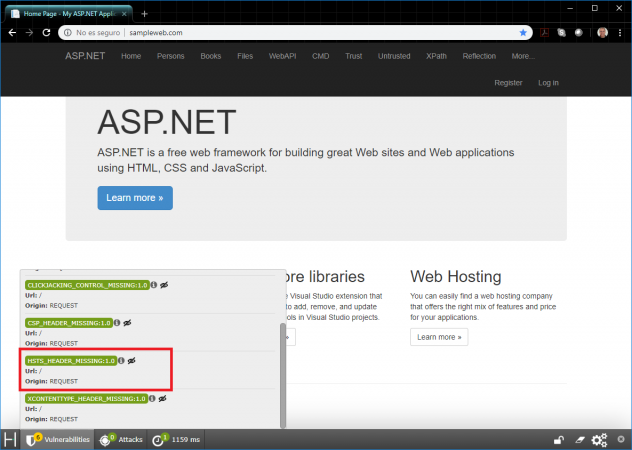

In the same way, it can also be helpful to use tools like Hdiv .NET, which help to identify some Sensitive Data Exposure vulnerabilities such as a missing HSTS directive, that is, whether or not your application forces encryption using directives like HSTS. The following screenshot shows a small sample in action: